Hi there! 👋 I am Yaxin Luo.

About Me


Hello! I am a first-year Machine Learning PhD student at MBZUAI, advised by Prof. Zhiqiang Shen. I also work closely with my friend Xiaofu Chen. My ambitious vision is to build reliable, scalable computer/device-use agent systems for real-world applications, and we organize MetaAgentX to conduct a series of research projects toward this goal. Currently, my research focuses on advancing native multimodal foundation models that unify understanding, generation, reasoning, planning, and action across diverse modalities.

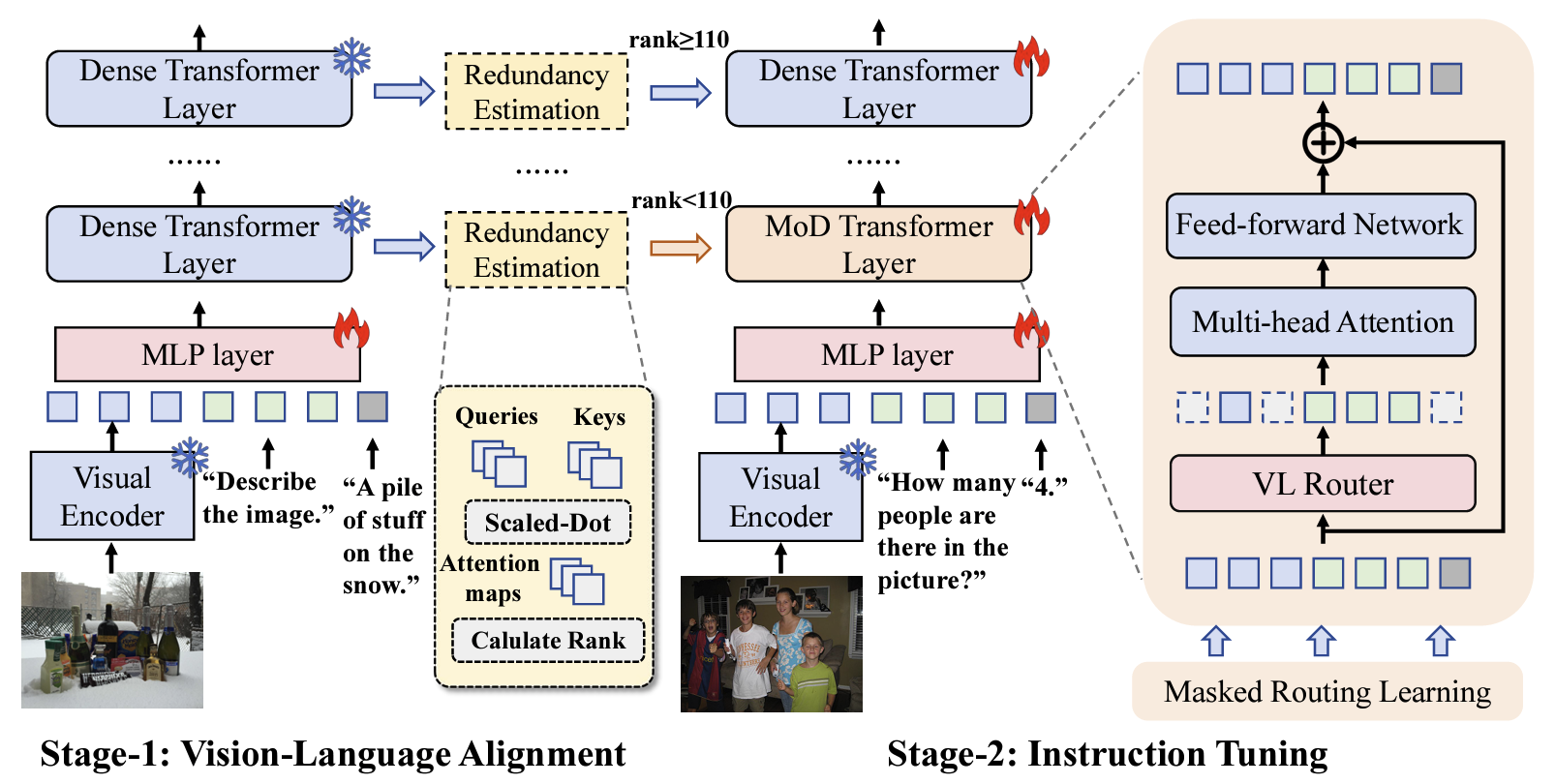

Previously, I earned my Bachelor's degree from the Technical University of Denmark, where I was fortunate to be supervised by Prof. Dim P. Papadopoulos. During my bachelor's studies, I was also lucky to collaborate with Dr. Gen Luo and Prof. Rongrong Ji on efficient deep learning research.

More about my earlier journey...

I spent an intense and rewarding year at the University of Edinburgh studying pure mathematics and physics. That experience sparked my passion for science and technology and deepened my curiosity about the unknown; at the time, I was especially eager to explore String Theory. This one year ultimately shaped who I am today.

Before Edinburgh, I was enrolled in a Bio-Medicine program at the University of Queensland and was preparing for the UCAT to gain admission to the university's medical school, but in the end I did not succeed. I had been far more focused on business than on study and research, as I was managing a high-street multi-brand boutique located in Brisbane's Southbank, near the casino. The Edinburgh year changed my priorities and set me on a research path, thanks to the advice, encouragement, and support of my academic personal tutor there, Prof. Ana Rita Pires. All of these past experiences have made me who I am today.

My research interests focus on:

- Unified Multimodal Foundation Models: Developing native multimodal foundation models that perform unified understanding, generation, reasoning, planning, and action across modalities. I aim to construct a universal interface where diverse modalities converge, enabling models to perceive complex real-world dynamics and generate coherent, high-fidelity multimodal content.

- Building reliable, scalable computer/device-use agent systems for real-world applications.

Recently, I have been focusing on long-horizon, editable agentic design foundation models and systems.

Experience

Research Intern, Meituan LongCat Team

Working on unified multimodal foundation model projects (LongCat-Next Team).

- Long-Horizon Multimodal Interactive Tasks for Unified Multimodal Models: Agentic Design System.

- Unified Discrete Vision Encoder for both understanding and generation.

Research Assistant, MBZUAI

Advised by Prof. Zhiqiang Shen at the VILA Lab.

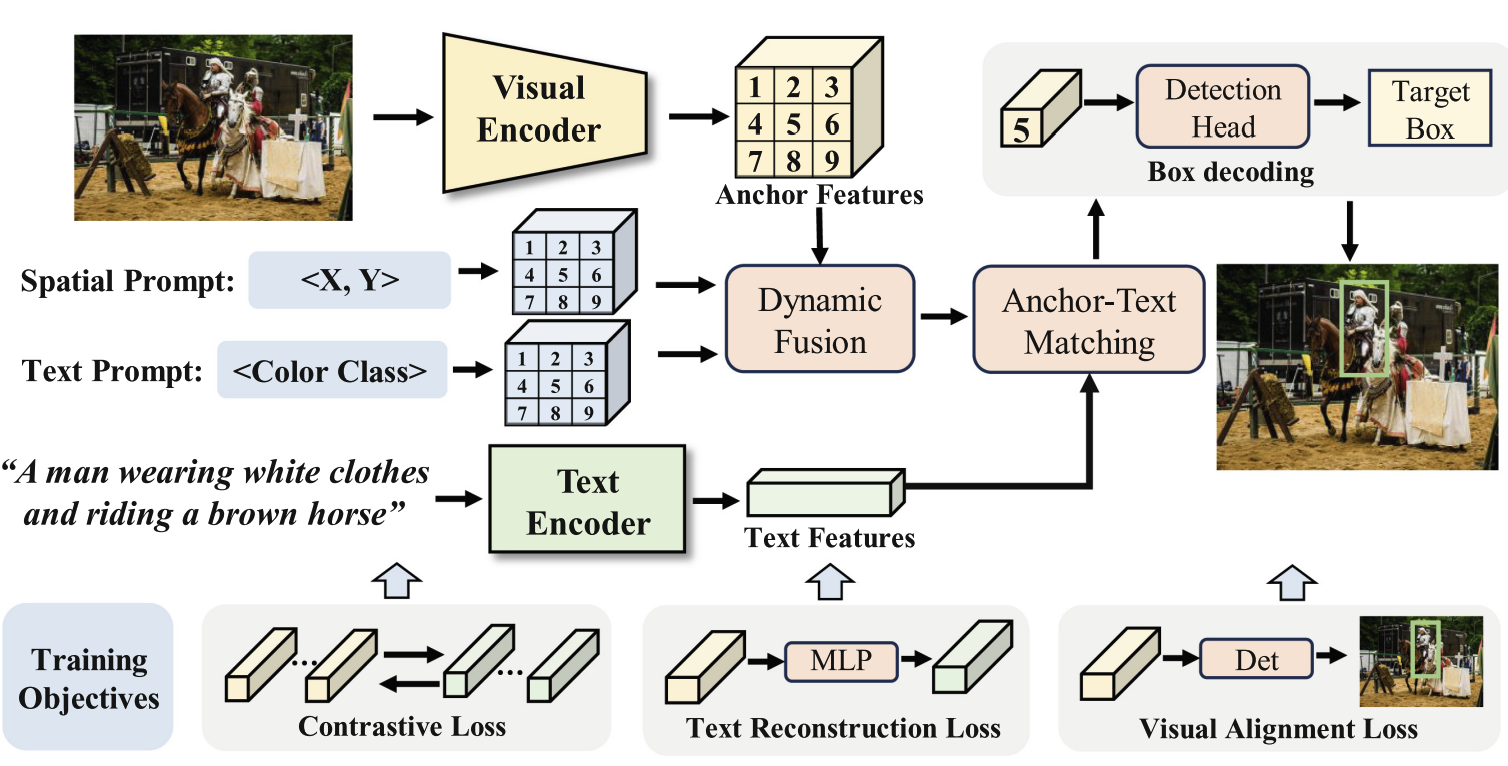

- Investigated language-pretraining-induced bias as a strong foundation for general vision tasks, showing that LLM priors transfer to pure-vision learning (published in TMLR 2026).

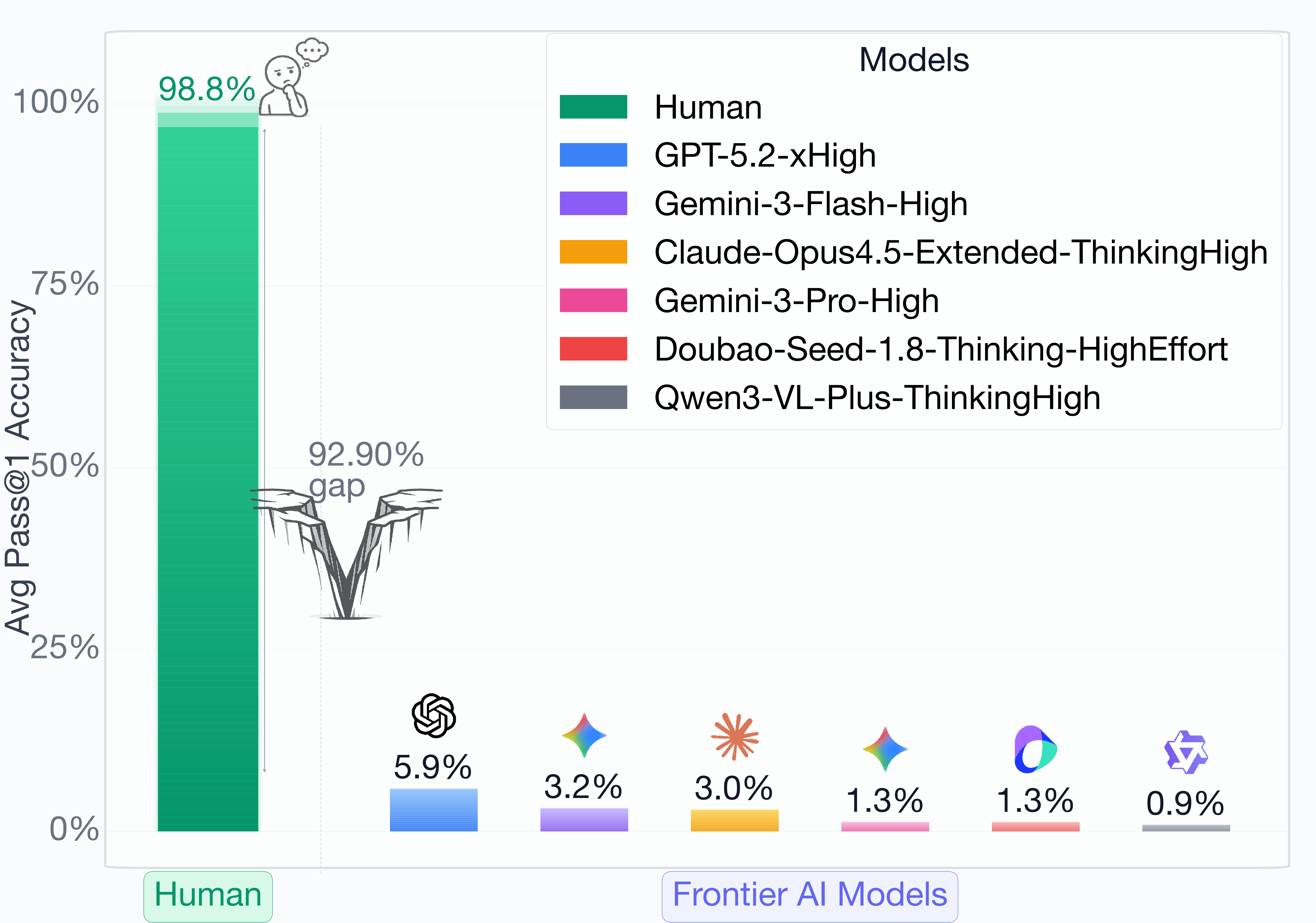

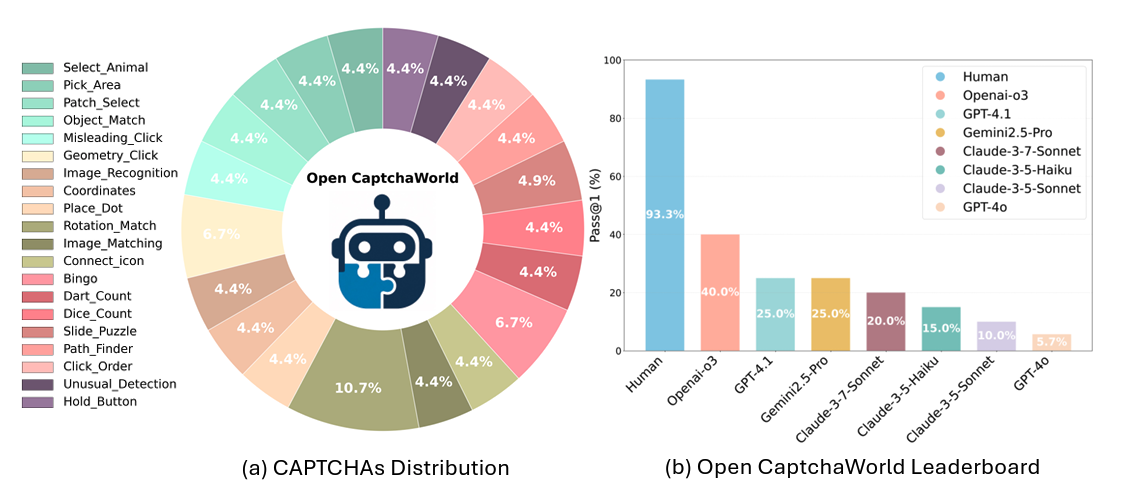

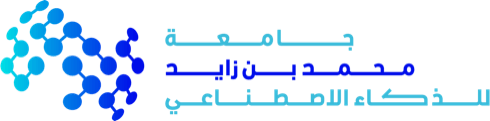

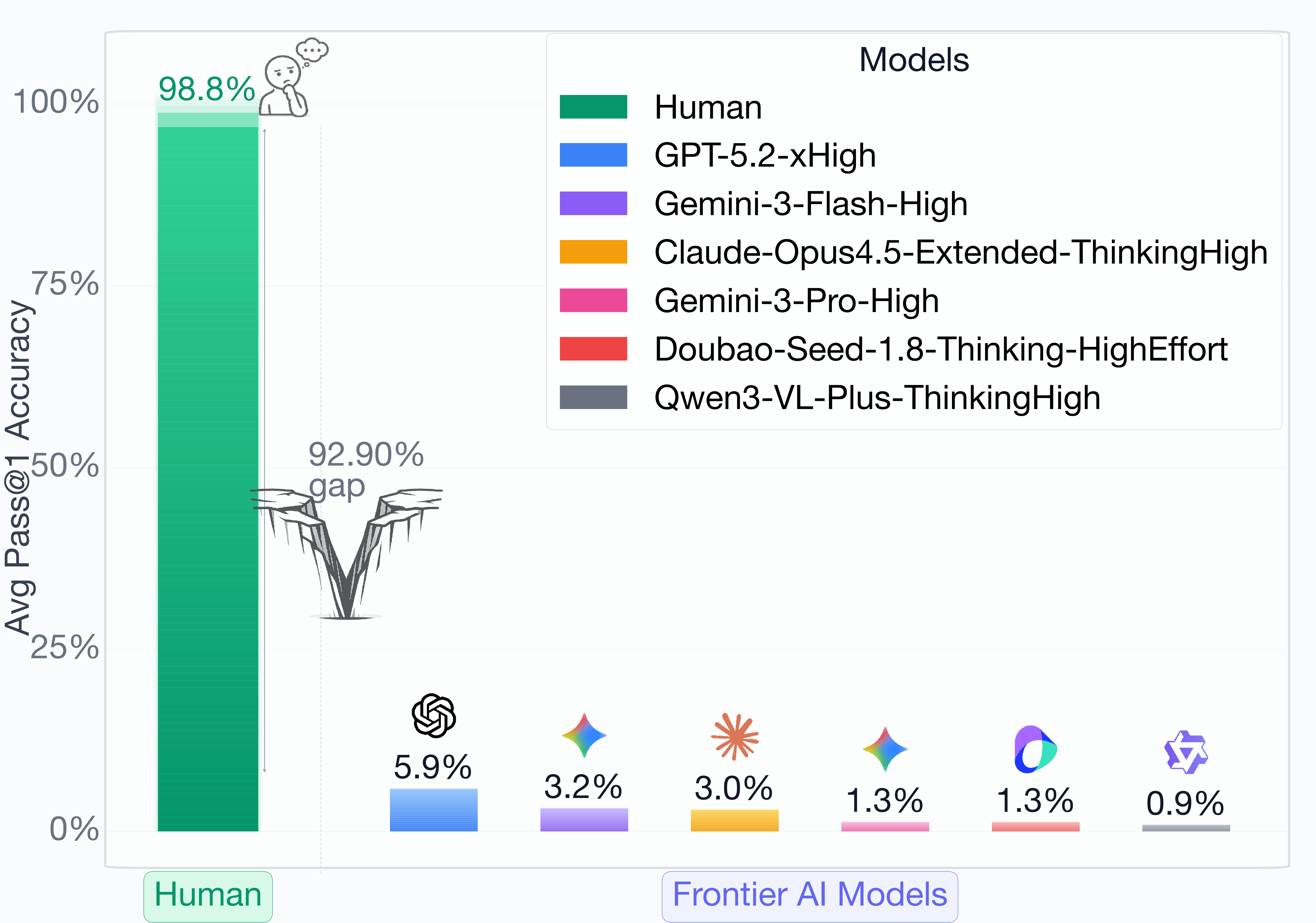

- Explored reasoning and agentic behaviors in multimodal large language models (MLLMs), leading the OpenCaptchaWorld benchmark (NeurIPS 2025).

News

[2026-02-10] 🚀 Next-Gen CAPTCHAs is now available on arXiv! It is a defense framework that leverages cognitive gaps to counter MLLM-based GUI agents.

[2025-09-18] 🚀 OpenCaptchaWorld has been accepted by NeurIPS 2025.

Selected Publications

(* indicates equal contribution)

For a full and up-to-date publication list, please refer to my Google Scholar page.